thoughts(security): raising a toddler, the panic/calm cycle, and adopting new data + AI platforms for proof of concepts

Introducing a very short data + AI platform security risk framework to use when adopting new platforms during proof of concepts

I promise I’m going to try my best to build a bridge from this personal opening to technical content without getting too much into “what raising a toddler taught me about B2B sales” territory. Bear with me.

I’m a new parent. My daughter just turned eighteen months old, which means she’s newly graduated from a tiny potato to a little person. I’m stopping myself from writing too much on how rewarding this journey has been, but I’ll just say how much more colorful life has been this last year and a half.

What I’m starting to pick up on in this odyssey of parenthood is that as she grows, so do my wife and I. When she was first born, our heads were spinning over everything we had to do and learn, but looking back, it’s almost like we were in simple times and didn’t even realize it because it was all so new.

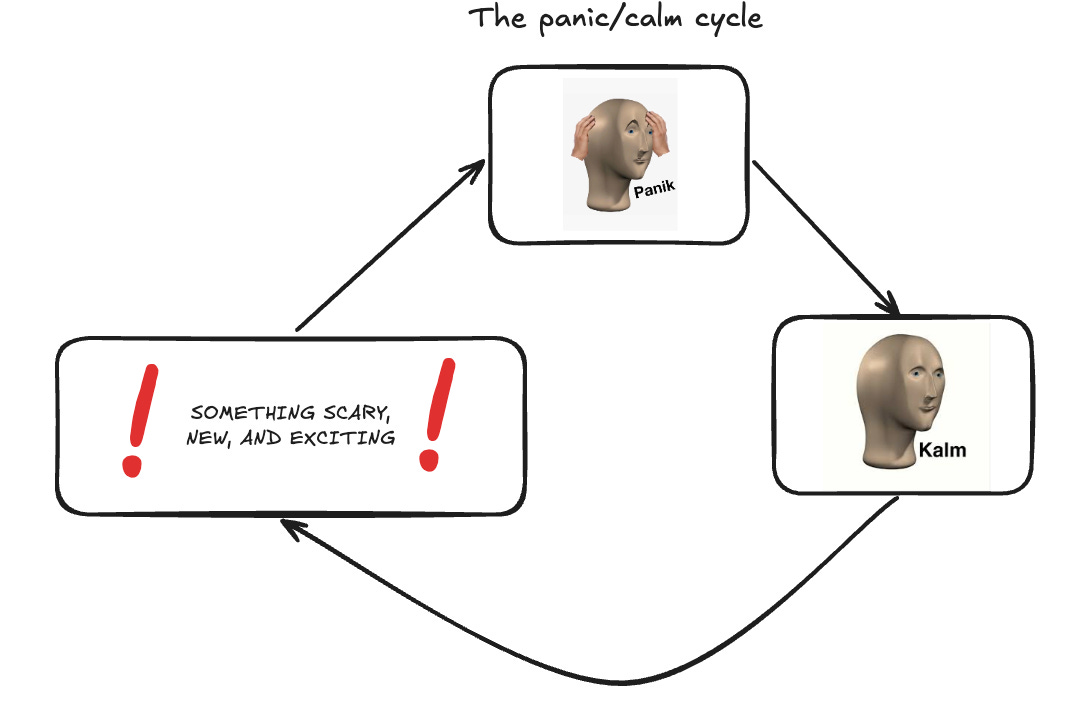

Every few days, weeks, or months went something like: something new, scary, and exciting → panic → calm → repeat.

As soon as she started eating solid foods, it repeated. As soon as she started walking, the cycle repeated. In this whirlwind of childhood development, it’s hard to realize how much you’ve grown as a parent just by being in the eye of the storm.

Seeing as this has been my most dramatic life experience so far, it made me stop and think how this panic and calm cycle is everywhere.

Something scary, new, and exciting brings risks. Those risks are new to us, so we panic. We then adapt to those risks, calm ensues, and the cycle repeats.

I opened up the blog post saying I’m a new parent, so clearly this isn’t heading toward me imparting some form of parental wisdom.

Actually the opposite, please send me your best toddler parenting tips.

Instead, I want to apply this cycle to a demographic that I’ve worked with for countless hours and across hundreds of organizations: security teams adopting data + AI platforms for the first time during proof of concepts / pilots / evaluation phase.

There’s the bridge by the way, I had to do it at some point.

NOTE: Moving forward I’ll be using proof of concepts, pilots, and evaluations interchangeably and platforms as shorthand for data + AI platforms.

I don’t know if there are enough fingers and toes in a three-mile radius to count the number of times I’ve seen the following scenario play out:

A team wants to bring in a data + AI platform for a use case

A team wants to use real company data in their pilot

A team asks the security team to approve the vendor and datasets

Pilot is scheduled to start on Monday by the way

Panic cycle begins for the security team.

Sending a third-party risk questionnaire and asking for compliance documentation is a normal starting line for evaluating any SaaS vendor, but for a platform with this much flexibility and power? It can’t be anywhere close to the finish line, because relevant security questions will just start pouring out.

How is data stored? How is it encrypted at rest and in transit? How do users get access? Can the vendor access the data? Are there third-party AI models? Is everything in our region? Where does the compute run? How does the compute run? Can we process PCI? Can we use our monitoring? Do they have monitoring?

It is at this point where I’ve seen security teams reach out about a list of concerns, then the vendor will respond back with relevant documentation to help settle their nerves. But, in the wrong context, sending over documentation can feel like telling someone who is upset to, “just calm down.”

I’m absolutely guilty of throwing documentation over the wall, by the way. It’s just a common industry way of operating that rightfully should be challenged. But we need to realize that the security team will only enter that calm phase through a deep understanding of the new, scary, and exciting platform, not just from walls of text.

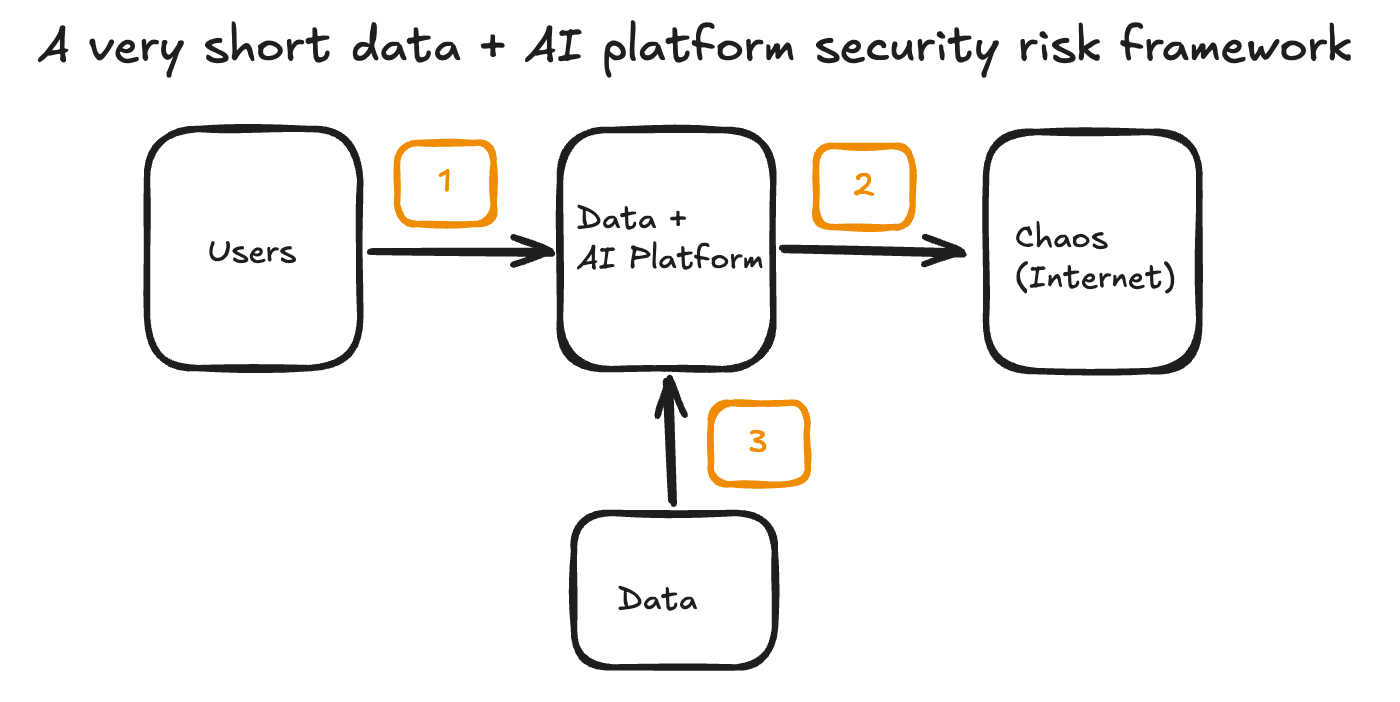

To expedite that deep understanding, I’m proposing a very short data + AI platform security risk framework, to help security teams get to that calm state faster when evaluating data + AI platforms.

The goal of this framework is that if you address and understand the context around its three controls, your organization will be in a good position to unlock pilots quickly, giving users the chance to perform real and impactful testing.

As the very memorable name suggests, a very short data + AI platform security risk framework is not meant to cover every risk that comes with the data and AI territory. It is specifically oriented toward simple controls for big rock security risks.

Remember, we just need to get through this first panic/calm cycle to move forward. So, let’s go through each of the controls of VSDAPSRF (the name is a work in progress).

First: Restrict all access to corporate networks

Some people might be surprised I don’t lead with SSO/MFA as a control, but to be honest, SSO/MFA is such low-hanging fruit that it needs to be table stakes for any platform. Most platforms have even removed username/password entirely at this point. We’ve also seen many incidents of malicious actors infiltrating corporate identity providers through social engineering alone, so by itself it is not a sufficient control in my eyes to address the core risk of malicious outsiders entering the platform.

Which is why the first thing the security team needs to do is configure the platform with an allow list for the corporate network and block all other connections. I’m partial to Private Link connections tunneling through a cloud provider, but VPN egress IPs are good as well.

With this first control in place, in addition to SSO/MFA (again, table stakes), you now have a reasonable level of confidence that anyone who gets into the platform will be originating from a company-approved network, a great start.
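To make the allow list idea concrete, here is a minimal sketch of the check a platform is effectively performing on every inbound connection. It is not any vendor's actual implementation: the function name `is_allowed` and the CIDR ranges are assumptions for illustration (the ranges are reserved documentation addresses, so swap in your own VPN egress or private endpoint ranges).

```python
import ipaddress

# Hypothetical corporate egress ranges. These are documentation-reserved
# CIDRs, not real corporate addresses.
CORPORATE_CIDRS = ["203.0.113.0/24", "198.51.100.16/28"]


def is_allowed(source_ip: str, allowed_cidrs=CORPORATE_CIDRS) -> bool:
    """Return True only if source_ip falls inside an allow-listed range."""
    try:
        addr = ipaddress.ip_address(source_ip)
    except ValueError:
        # Malformed input is treated as a deny, never as an allow.
        return False
    return any(addr in ipaddress.ip_network(cidr) for cidr in allowed_cidrs)


if __name__ == "__main__":
    for ip in ["203.0.113.7", "198.51.100.20", "8.8.8.8"]:
        print(ip, "allow" if is_allowed(ip) else "deny")
```

The important design choice is the default: anything that is not explicitly on the list, including input that cannot be parsed, is denied.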

Second: Cut off all outbound access to the public internet

Nearly all platforms function as general compute engines these days. Users can normally write in whichever data-oriented language (e.g. Python, Scala, R, etc.) they’d like, and even in SQL-based platforms, driven folks can still usually find a way to wrap up something like Python in a user-defined function (UDF).

That means that users can do nearly anything. Pull in a cool package that turns data frames into dog caricatures, sure! Work with that new AI model, based in a totally different region, they saw on Hacker News? Why not! Accidentally get their credentials phished because they got typo-squatted on a common package name? On the table.

The internet is chaos. Cut it off, you’ll feel so much better for it. Then you can build from the back by incorporating private package repositories, Private Link connections to necessary services, etc. and, if you really need it, a firewall used for specific and approved public endpoints only (not all of PyPI).
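As a rough sketch of that "deny by default, approve specific endpoints" posture, the snippet below models the decision a firewall or egress proxy would make. Everything named here is hypothetical: `egress_allowed`, the private package mirror hostname, and the approved vendor API are placeholders, and a real deployment would enforce this at the network layer rather than in application code.

```python
from urllib.parse import urlsplit

# Hypothetical approved endpoints: a private package mirror and one vetted API.
APPROVED_HOSTS = {
    "pypi.internal.example.com",
    "api.approved-vendor.example.com",
}


def egress_allowed(url: str, approved_hosts=APPROVED_HOSTS) -> bool:
    """Default deny: allow only HTTPS requests to exactly matching hosts."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    # Exact host match on purpose, so look-alike domains do not slip through.
    return parts.scheme == "https" and host in approved_hosts


if __name__ == "__main__":
    for url in [
        "https://pypi.internal.example.com/simple/requests/",
        "https://pypi.org/simple/requests/",
        "https://pypi.internal.example.com.evil.example/simple/",
    ]:
        print(url, "allow" if egress_allowed(url) else "deny")
```

Exact matching is a deliberate choice in this sketch: a suffix or substring match is how a typo-squatted or look-alike host ends up approved by accident.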

Third: Limit datasets to those that are: useful for business decisions, used by everyone, or are in need of a new home anyway

With our first two controls covered, we now already know where users are logging in from and that they can’t reach out to the public internet, so what’s next? To give them something to actually evaluate the platform end-to-end with.

This can be the hardest part about any platform evaluation, since it’s the first time in the process you’re crossing that line of actually giving a SaaS vendor access to your data.

It’s like the feeling I had dropping my daughter off at daycare for the first time. I knew that the facility was highly rated and properly accredited, I knew that the teachers were great, and I knew she would love it, but part of me was panicking and yelling, “no, she’ll be safer with you!”

But in the words of Terry Hoitz from The Other Guys:

“I'm a peacock Captain! You gotta let me fly!”

Let’s let users fly.

I do want to be clear. I don’t know your industry as well as you do, I don’t know your specific regulations as well as you do, and I don’t know your datasets as well as you do.

So, instead of me telling you, “this is what data you should use”, here are three evaluation criteria you can use when limiting the first datasets to be added:

Is it actually useful to your users to make business decisions? A knee-jerk reaction would be to think, “let’s just use the sample data on the platform.” But then you remember, you’re an aeronautics company out of Europe and creating visualizations of New York City taxi data is of absolutely zero value, zero. As a security team, validate that the datasets they want are directly tied to some business-related outcome.

Can it already be accessed by all different types of data professionals in your organization? This is just day one of using the platform. You can always implement row-based access controls and column filters down the line, but the goal here is flying through the first panic/calm cycle like the peacocks we are. Look for datasets that are already accessed through existing corporate tooling and are generally available to the user groups that will be piloting the platform.

Does the data need a new home anyway? If you’re evaluating a platform for the purposes of migrating off of a mainframe or rehoming from another platform altogether, these datasets need to be top candidates to add. You wouldn’t buy a TV knowing you can’t watch your favorite channels, same logic applies here.

A note on regulated data: All major data + AI platforms can support the processing of regulated data. If regulated data checks all the boxes of the evaluation criteria I listed above, ensure that you’ve enabled all relevant and vendor-recommended controls before ingesting this data.

Limiting your datasets to those that fit these three criteria will reduce the blast radius compared to bringing everything and the kitchen sink for the pilot, but will still give your users a real foundation to build from.

There are going to be so many additional data security controls that you can implement as you grow into your platform, from automated data classification to attribute-based access controls, fine-grained access controls, environment isolation, etc. But again, the framework is all about that first panic/calm cycle, and there are many more cycles to come.
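If it helps to see the three criteria as a checklist, here is a small sketch of how a team might triage candidate datasets. The class, field names, and example datasets are all made up for illustration. Since the control's heading joins the criteria with "or", I've treated the threshold as a tunable `minimum` (my assumption), while regulated data has to meet all three and have vendor-recommended controls enabled, per the note above.

```python
from dataclasses import dataclass


@dataclass
class DatasetCandidate:
    name: str
    drives_business_decision: bool   # criterion 1
    broadly_accessible_today: bool   # criterion 2
    needs_new_home: bool             # criterion 3
    regulated: bool = False
    vendor_controls_enabled: bool = False


def criteria_met(ds: DatasetCandidate) -> list:
    """List the labels of the criteria this dataset satisfies."""
    checks = (
        ("useful", ds.drives_business_decision),
        ("broadly accessible", ds.broadly_accessible_today),
        ("needs new home", ds.needs_new_home),
    )
    return [label for label, ok in checks if ok]


def pilot_ready(ds: DatasetCandidate, minimum: int = 1) -> bool:
    """Assumed rule: regulated data needs all three criteria plus controls."""
    met = len(criteria_met(ds))
    if ds.regulated:
        return met == 3 and ds.vendor_controls_enabled
    return met >= minimum


if __name__ == "__main__":
    candidates = [
        DatasetCandidate("sales_orders", True, True, False),
        DatasetCandidate("nyc_taxi_sample", False, False, False),
        DatasetCandidate("card_transactions", True, True, True, regulated=True),
    ]
    for ds in candidates:
        print(ds.name, criteria_met(ds), pilot_ready(ds))
```

Raising `minimum` to 2 or 3 gives a stricter first cut if your security team wants a smaller starting footprint.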

What about AI in all of this?

A noticeable gap in this framework is risk from AI, since we are talking about data + AI platforms.

Now, I’m not talking about AI capabilities that are explicit actions, e.g. “I’m going to create an AI model.” I’m talking about implicit actions that users may take in their day-to-day operation, like tab completions, using assistants, etc. Platforms have integrated third-party models into these core functions, where the vendor will facilitate end-to-end connectivity to OpenAI, Anthropic, Gemini, etc. under the hood.

First, I’d recommend having an honest conversation with the vendor about what models are being used, what data is being sent, and during which user operations. There will be some documentation in this phase, that’s OK, but live conversations are refreshing.

Second, once you have done your due diligence, have another honest conversation with your business users internally. Ask them, “is this valuable for you to use?”, “will this change your day-to-day work?”, “if this delays the pilot by a week, so we can take a deep dive, is that OK?”. If all those are yes, then you have your answer on whether you’ll need to include it in the pilot or not.

Understanding that this is a happy path, some enterprises might be working with a DENY ALL policy from day one on non-approved third-party models, and that’s OK, make sure to just talk to your vendor in that case.
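For what it's worth, the conversation above boils down to a very small decision table. The sketch below is just one way to write it down, with names I made up (`Decision`, `ai_assist_decision`). Treating any "no" as "leave it out of the pilot for now" is my assumption about the unstated branch.

```python
from enum import Enum


class Decision(Enum):
    INCLUDE = "include in the pilot after the deep dive"
    EXCLUDE = "leave out of the pilot for now"
    TALK_TO_VENDOR = "talk to the vendor about the DENY ALL policy"


def ai_assist_decision(
    valuable: bool,
    changes_daily_work: bool,
    delay_acceptable: bool,
    deny_all_policy: bool = False,
) -> Decision:
    """Map the three user answers, plus any DENY ALL policy, to a next step."""
    if deny_all_policy:
        # An existing enterprise policy overrides user enthusiasm.
        return Decision.TALK_TO_VENDOR
    if valuable and changes_daily_work and delay_acceptable:
        return Decision.INCLUDE
    return Decision.EXCLUDE


if __name__ == "__main__":
    print(ai_assist_decision(True, True, True).value)
    print(ai_assist_decision(True, False, True).value)
    print(ai_assist_decision(True, True, True, deny_all_policy=True).value)
```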

AI capabilities right now are for you to judge based on the vendor’s controls, your existing policies, the value to your users, and your comfort level at this stage of the platform pilot.

Are we starting to feel calmer?

Having three core controls to provide us a deep understanding of who’s coming in, what’s going out, and what data is accessible will start to drift us down from panic mode to a new calm state, so that the evaluation can continue on a respectable timeline with our core security controls in place.

There are so many more risks and controls on the horizon when adopting any data + AI platform, but we can only face what’s immediately ahead of us. I know at some point my daughter is going to want to drive (when she’s 30), but I’m going to put that out of my mind for now and focus on the here and now, like making sure she’s not putting peanut butter toast in her hair.

I challenge you to try and do the same when you’re evaluating the security model of data and AI platforms, control for the big rock risks to get teams moving in the right direction to make hard and core business decisions quickly, then build on top of that.

JD

General notice: Opinions expressed are solely my own and do not express the views or opinions of my employer.

AI Notice: To maintain my voice and original intent throughout the article no AI was used for brainstorming, content creation, content validation, flow, or otherwise.